Abstract

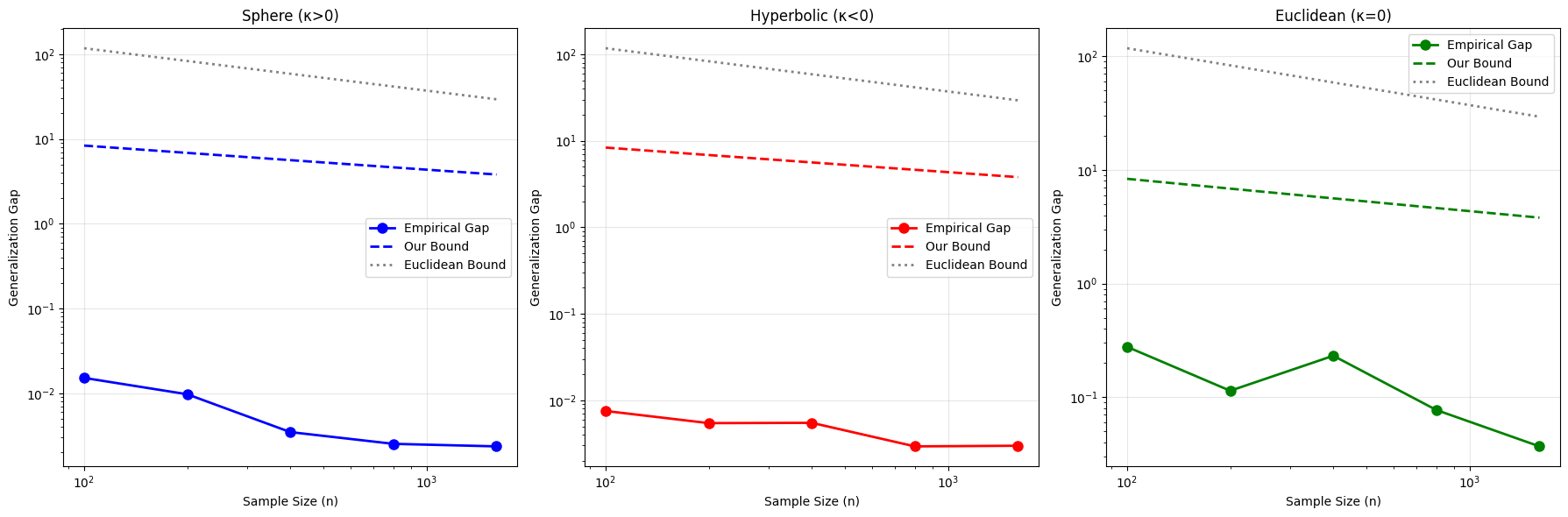

In this work, we develop new generalization bounds for neural networks trained on data supported on Riemannian manifolds. Existing generalization theories often rely on complexity measures derived from Euclidean geometry, which fail to account for the intrinsic structure of non-Euclidean spaces. Our analysis introduces a geometric refinement: we derive covering number bounds that explicitly incorporate manifold-specific properties such as sectional curvature, volume growth, and injectivity radius. These geometric corrections lead to sharper Rademacher complexity bounds for classes of Lipschitz neural networks defined on compact manifolds. We illustrate the tightness of our bounds in negatively curved spaces, where exponential volume growth leads to provably higher complexity, and in positively curved spaces, where curvature acts as a regularizing factor.

Theoretical Framework

We consider a compact, smooth Riemannian manifold \((\mathcal{M}, g, \mu)\) of dimension \(d\), with metric \(g\) and measure \(\mu\), whose sectional curvature satisfies \(\kappa_{\min} \leq \sec \leq \kappa_{\max}\). Our goal is to analyze the generalization properties of neural networks \(f: \mathcal{M} \to \mathbb{R}\) drawn from a class \(\mathcal{F}\) of \(L\)-Lipschitz functions.

Manifold Covering Number

For \(\epsilon < \frac{1}{2}\text{inj}(\mathcal{M})\), the covering number of the manifold with respect to geodesic distance satisfies:

The bound follows from Bishop-Gromov volume comparison, which controls the volume of geodesic balls in terms of curvature and therefore the number of balls required to cover the manifold.
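As a rough numerical sketch of how volume comparison yields covering-number bounds, consider the standard packing argument (an assumption about the form of the bound, not necessarily the exact statement of this section). Let \(V_{\kappa}(r)\) denote the volume of a radius-\(r\) geodesic ball in the \(d\)-dimensional model space of constant curvature \(\kappa\). A maximal \(\epsilon\)-separated set is an \(\epsilon\)-cover whose \(\epsilon/2\)-balls are disjoint, so the covering number is at most \(\mathrm{Vol}(\mathcal{M}) / V_{\kappa_{\max}}(\epsilon/2)\) (using the lower volume bound for balls below the injectivity radius), while Bishop-Gromov gives at least \(\mathrm{Vol}(\mathcal{M}) / V_{\kappa_{\min}}(\epsilon)\). The function names below are illustrative.

```python
import math


def sn(kappa, t):
    """Radial profile sn_kappa(t) of the constant-curvature model space."""
    if kappa > 0:
        s = math.sqrt(kappa)
        # Clamp tiny negative round-off near t = pi / sqrt(kappa).
        return max(0.0, math.sin(s * t)) / s
    if kappa < 0:
        s = math.sqrt(-kappa)
        return math.sinh(s * t) / s
    return t


def model_ball_volume(d, kappa, r, steps=2000):
    """Volume of a radius-r geodesic ball in the d-dimensional model space.

    Evaluates omega_{d-1} * integral_0^r sn_kappa(t)^(d-1) dt with the
    composite Simpson rule (steps must be even).
    """
    if kappa > 0 and r > math.pi / math.sqrt(kappa):
        raise ValueError("radius exceeds the diameter of the model sphere")
    omega = 2 * math.pi ** (d / 2) / math.gamma(d / 2)  # area of unit S^(d-1)
    h = r / steps
    total = sn(kappa, 0.0) ** (d - 1) + sn(kappa, r) ** (d - 1)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * sn(kappa, i * h) ** (d - 1)
    return omega * total * h / 3


def covering_number_bounds(vol, d, kappa_min, kappa_max, eps):
    """Packing-argument (lower, upper) bounds on the eps-covering number.

    Assumes eps / 2 is below the injectivity radius of the manifold.
    """
    lower = vol / model_ball_volume(d, kappa_min, eps)
    upper = vol / model_ball_volume(d, kappa_max, eps / 2)
    return lower, upper


# Example: unit 2-sphere (volume 4*pi, constant curvature 1), eps = 0.5.
lower, upper = covering_number_bounds(4 * math.pi, 2, 1.0, 1.0, 0.5)
print(f"covering number between {lower:.1f} and {upper:.1f}")
```

For \(\kappa = 0\) the model ball volume reduces to the Euclidean one, so both bounds scale as \(\epsilon^{-d}\) in the intrinsic dimension.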

Curvature-Adaptive Generalization Bound

With probability at least \(1 - \delta\), for all \(f \in \mathcal{F}\), the generalization error is bounded by:

where the curvature penalty \(\psi(\kappa, L) = \sqrt{|\kappa|}/L\) captures the complexity increase due to negative curvature.
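As a small illustration of the penalty term, the sketch below evaluates \(\psi(\kappa, L) = \sqrt{|\kappa|}/L\) for the curvature values listed in the results table. The Lipschitz constant \(L = 1\) is a hypothetical placeholder, since no value of \(L\) is given here, and the function name is illustrative.

```python
import math


def curvature_penalty(kappa, lipschitz):
    """psi(kappa, L) = sqrt(|kappa|) / L, as defined in the text."""
    if lipschitz <= 0:
        raise ValueError("Lipschitz constant must be positive")
    return math.sqrt(abs(kappa)) / lipschitz


# Curvature values from the results table; L = 1.0 is an assumed placeholder.
curvatures = {
    "Swiss Roll": 0.2553,
    "MNIST Digits": 0.0146,
    "Noisy Sphere": 6.9138,
}
for name, kappa in curvatures.items():
    print(f"{name}: psi = {curvature_penalty(kappa, 1.0):.4f}")
```

As written, the formula depends only on \(|\kappa|\), so it vanishes in the flat case and takes the same value for curvatures of equal magnitude and opposite sign.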

Key Insights

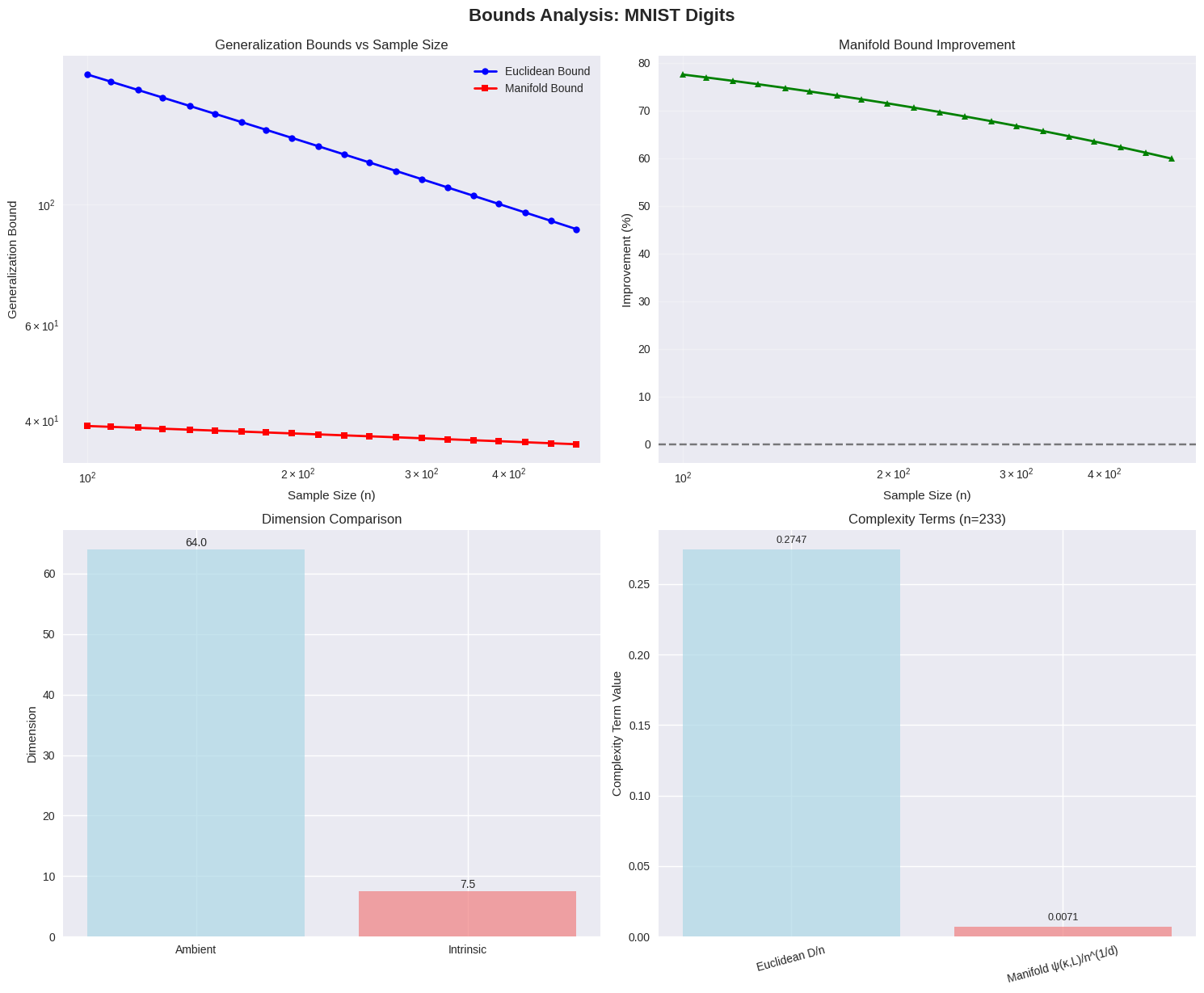

- Intrinsic Dimension vs. Ambient Dimension: Our bounds scale with the intrinsic dimension \(d\) of the manifold rather than the ambient Euclidean dimension \(D\), providing much tighter guarantees for data on low-dimensional manifolds.

- Curvature as a Complexity Driver: Negatively curved (hyperbolic) spaces exhibit exponential volume growth, which leads to larger covering numbers and therefore higher sample complexity. Conversely, positively curved (spherical) spaces have slower volume growth, so curvature acts as a natural regularizer.

- Geometric Refinement: The bounds incorporate the injectivity radius and the sectional curvature, and they recover the classical Euclidean results as the special case \(\kappa = 0\), where the curvature penalty \(\psi(\kappa, L)\) vanishes.
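The effect of curvature on volume growth can be illustrated with the closed-form areas of geodesic disks in the three two-dimensional model spaces (curvature -1, 0, and +1). This is a sketch based on standard formulas rather than the experiments of this work. The ratio area(R) / area(eps) is used only as a heuristic proxy for the number of eps-disks needed to cover a disk of radius R, and the function names are illustrative.

```python
import math


def disk_area(kappa_sign, r):
    """Area of a geodesic disk of radius r in the 2D model space.

    kappa_sign < 0: hyperbolic plane (curvature -1)
    kappa_sign = 0: Euclidean plane
    kappa_sign > 0: unit sphere (curvature +1), valid for r <= pi
    """
    if kappa_sign < 0:
        return 2 * math.pi * (math.cosh(r) - 1)
    if kappa_sign > 0:
        if r > math.pi:
            raise ValueError("radius exceeds the diameter of the unit sphere")
        return 2 * math.pi * (1 - math.cos(r))
    return math.pi * r ** 2


def covering_ratio(kappa_sign, big_r, eps):
    """Volume-ratio proxy for the number of eps-disks covering a disk of radius big_r."""
    return disk_area(kappa_sign, big_r) / disk_area(kappa_sign, eps)


for big_r in (1.0, 2.0, 3.0):
    hyp = covering_ratio(-1, big_r, 0.1)
    euc = covering_ratio(0, big_r, 0.1)
    sph = covering_ratio(1, big_r, 0.1)
    print(f"R={big_r}: hyperbolic={hyp:.0f}, Euclidean={euc:.0f}, spherical={sph:.0f}")
```

At a fixed scale eps, the hyperbolic ratio grows exponentially in R, the Euclidean ratio grows quadratically, and the spherical ratio saturates, which matches the qualitative ordering described in the bullets above.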

Experimental Validation

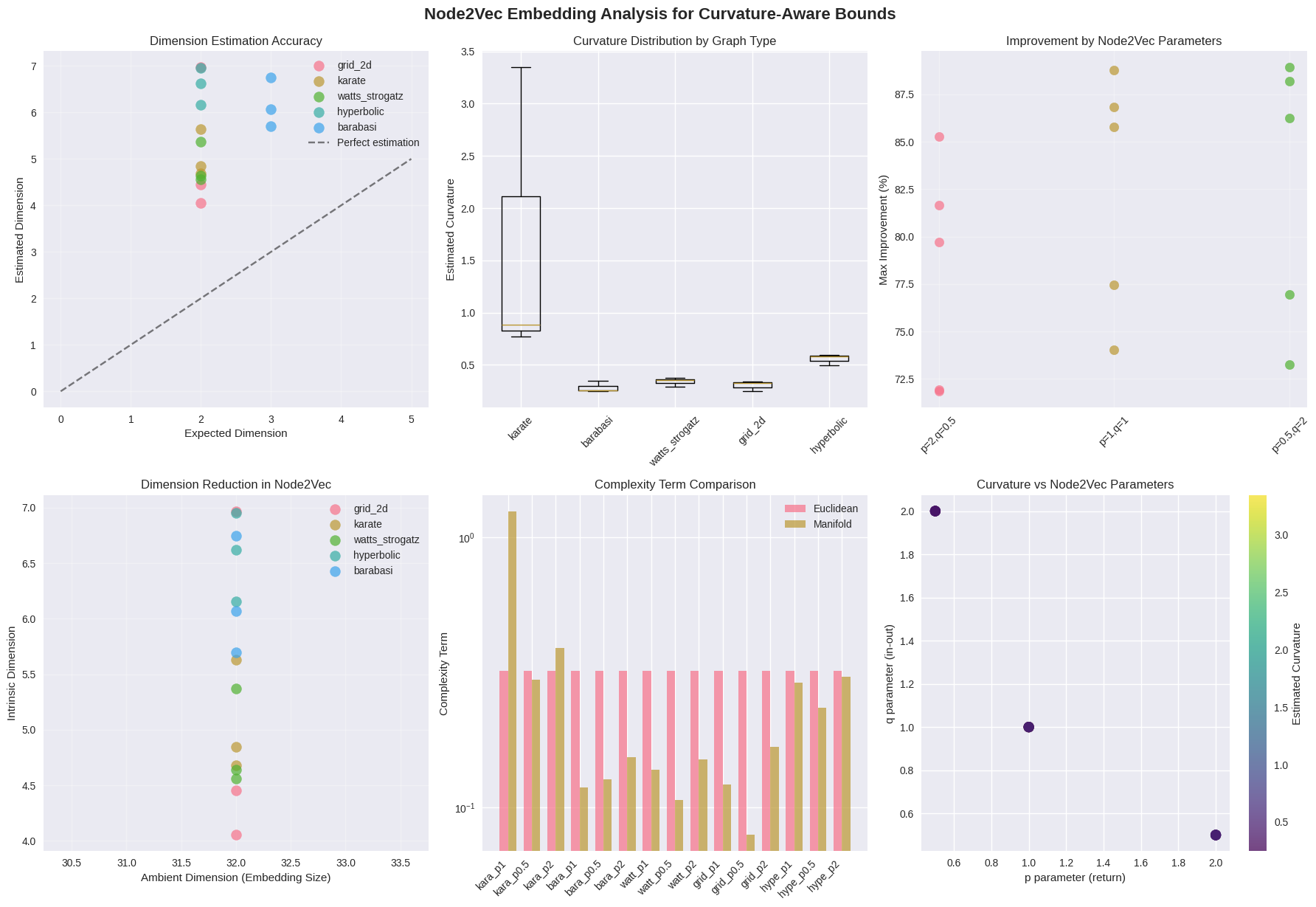

We empirically validate our curvature-dependent generalization bounds through synthetic manifold experiments and real-world data embeddings.

Real-World Embedding Geometry

| Dataset | Ambient \(D\) | Intrinsic \(d\) | Curvature \(\kappa\) | Improvement over Euclidean |
|---|---|---|---|---|
| Swiss Roll | 3 | 2.1 | 0.2553 | 91.2% |
| MNIST Digits | 64 | 7.5 | 0.0146 | 77.5% |
| Noisy Sphere | 3 | 2.3 | 6.9138 | 89.5% |

Conclusion

This work bridges the gap between differential geometry and statistical learning theory. By deriving generalization guarantees that explicitly account for the Riemannian structure of the data domain, we provide a more principled understanding of how intrinsic geometry affects the learning capacity of neural networks. Our findings have significant implications for geometric deep learning, particularly in choosing appropriate latent space geometries for structured data.